Unleashing enterprise productivity: The UiPath blueprint for AI at work


Generative AI has ignited a surge in personal productivity, helping us do all sorts of tasks more efficiently. Yet, in the enterprise, this productivity has hit a wall. While business leaders are eager to deploy AI across their companies, a number of challenges, especially around trust, are giving them pause. In a Workday survey of more than 2,300 business executives, 43% of respondents were “concerned about the trustworthiness of AI and ML.”

Fortunately, new developments are helping companies overcome these hurdles and paving the way for enterprise-wide productivity improvements.

These developments were discussed in detail during “The UiPath Blueprint for AI at Work” keynote at FORWARD VI. The keynote featured AI experts Professor David Barber, Director UCL Centre for Artificial Intelligence and UiPath Distinguished Scientist, Dr. Ed Challis, Head of AI Strategy at UiPath, and Luke Palamara, VP, AI Product Management at UiPath.

The experts had a fascinating conversation around the history of AI and what the latest advances mean for enterprise users.

The era of AI

Because AI has only recently come to dominate headlines, many people believe it’s a completely new technology. However, researchers have actually been working on it for at least half a century. And, according to Barber, the “ambition has always been to make these systems do things like humans can do.”

To get closer to replicating human intelligence, researchers have, in recent decades, created artificial neural networks that mirror how our brains work.

The idea made sense but figuring out how to connect artificial neural networks “is a very complicated problem,” Barber said. He explained that “the amount of compute…and the amount of data you need to determine those connections is very, very difficult. So, while the field has been around a long time, it's only relatively recently that we've seen accelerated progress in the sense that these systems are becoming very, very useful.”

That accelerated progress came in 2012 with the widespread availability of graphics processing units (GPUs). GPUs provided a much-needed boost in processing power, which has greatly reduced the time it takes to train AI.

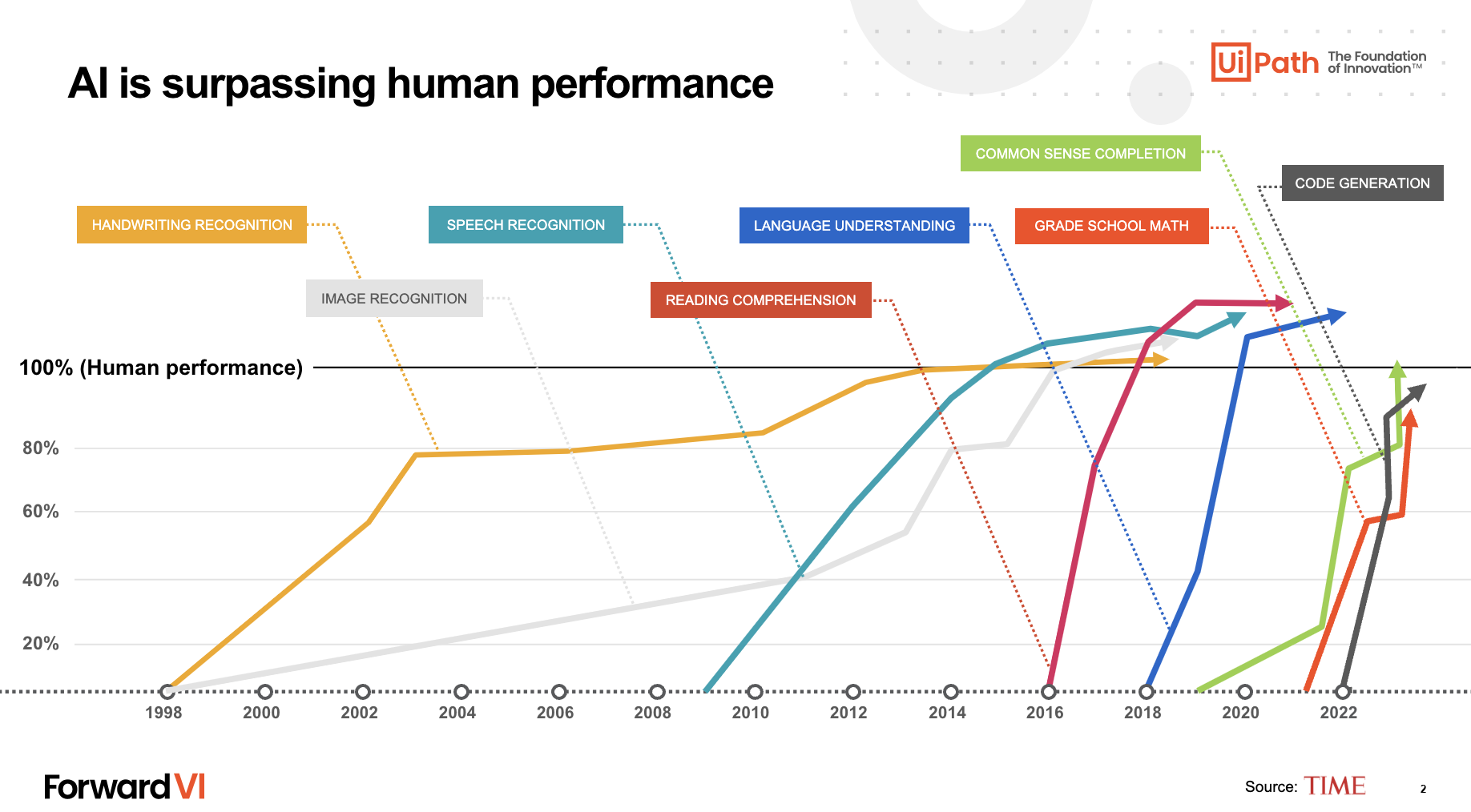

As the graph above shows, today’s GPU-powered AI beats the average human at object recognition, speech recognition, and even reading comprehension. But these perceptual tasks are only half of what makes human intelligence so profound.

Making AI a contributor rather than just an advisor

As AI’s perceptual abilities ramped up, researchers began exploring its generative potential. In 2019, they had the bold idea to train an LLM on a huge chunk of the internet and ask it to generate the next word in a sentence.

As we’ve seen with LLMs like ChatGPT, the outcomes have been astonishing. These systems don’t just generate pieces of code or text—given the vast linguistic data available online, they’ve acquired a depth of cultural understanding previously considered unattainable.

But while the creativity of these systems is amazing, they’re far from perfect.

“The good thing is [LLMs] are…built like the human brain…so they're good at cognitive tasks. The bad thing is they're built like the human brain in the sense that they're also fallible.”

David Barber, Director UCL Centre for Artificial Intelligence and UiPath Distinguished Scientist

Getting a grasp on when we can trust AI and when we can’t is a huge hurdle standing in the way of broader enterprise adoption (more on that shortly).

Current AI challenges

When it comes to today’s enterprise AI, “things are kind of bifurcated,” according to Palamara.

On one hand, you have Specialized AI—highly tailored models that are fast, inexpensive, and possess a detailed understanding of business data. They’re highly effective at specific tasks, like extracting information from communications and from documents, including invoices, bills, and receipts. However, they are less effective when faced with discrepancies outside of the examples they’re trained on.

Then you have LLMs, which underpin Generative AI. They’re immensely powerful, but mainly for individual tasks like summarizing information, analyzing data, etc. They have other limitations, too, such as providing inaccurate information.

To get AI to go beyond personal tasks and boost enterprise productivity, it needs to be more than an advisor—it needs to actually do work. How can we make that real?

According to Palamara, there are three critical ingredients.

Context

“AI is only as good as the information you give it,” Palamara said. Even if you have the best customer service agent in the world, they won’t be able to help customers if they don’t have knowledge of the company’s policies.

Within the UiPath Business Automation Platform, one of the ways this comes together is through UiPath Integration Service. It acts as a bridge between AI and relevant data sources, giving AI the context needed to get things done.

Action

Palamara asked the crowd, “what good is knowledge if you can’t act on it?” To be productive at an enterprise level, AI needs to do more than generate text and images. It needs to do things like move data between systems, respond to customers, and place orders automatically.

“This is what UiPath is all about…it’s about putting AI to work. It’s about bringing this rich set of capabilities that allow AI to take action and not just be an advisor.”

Luke Palamara, VP, AI Product Management, UiPath

Trust

Many company leaders are eager to embrace AI, but don’t yet have a sufficient level of trust in it. “Trust is the foundation. If we can’t trust these systems, ultimately we can’t use them,” Dr. Challis said.

Dr. Challis defined the three core challenges standing in the way of trusting AI: information security, a lack of specialized knowledge, and hallucinations.

UiPath is working hard to address these challenges. The new UiPath AI Trust Layer provides model transparency, admin controls, and usage auditing to assure users that their data is safe within UiPath applications.

There are a few ways to address a lack of specialized knowledge. First, you can give accurate prompts. “UiPath is super strong at getting that information into the LLM,” Dr. Challis said.

You can also fine-tune the models with specialized information. This has been expensive historically, but active learning is a new way to train models much more efficiently.

How active learning boosts efficiency

Essentially, active learning lets the model pick the examples it most needs labeled rather than relying on humans to label everything up front. For instance, traditionally, training a model to identify a cat requires labeling cat images manually. With active learning, the model makes its own identifications and asks for human feedback only on the images it is unsure about, drastically reducing the manual data labeling bottleneck.

Replace cats with documents, and the enterprise value becomes apparent. Dr. Challis said that he views “active learning almost as a conversation between two colleagues. You only ask questions about the things you're uncertain of, you don't ask the same question again and again.”
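To make the "only ask about what you're uncertain of" idea concrete, here is a minimal sketch of uncertainty sampling, one common form of active learning. It is an illustration under stated assumptions, not a description of how UiPath implements the feature: the file names, the probabilities, and the helper names `uncertainty` and `select_for_review` are all hypothetical.

```python
from typing import Dict, List


def uncertainty(prob_positive: float) -> float:
    """Score how unsure the model is: 1.0 at p = 0.5, 0.0 at p = 0 or p = 1."""
    return 1.0 - abs(prob_positive - 0.5) * 2.0


def select_for_review(predictions: Dict[str, float], budget: int) -> List[str]:
    """Return the `budget` items the model is least sure about, most uncertain first."""
    ranked = sorted(
        predictions,
        key=lambda item: uncertainty(predictions[item]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical model outputs: probability that each document is an invoice.
predictions = {
    "doc_a.pdf": 0.98,  # confident "invoice"
    "doc_b.pdf": 0.52,  # nearly a coin flip
    "doc_c.pdf": 0.07,  # confident "not an invoice"
    "doc_d.pdf": 0.61,  # somewhat unsure
}

# Only the two most uncertain documents are sent to a person for labeling.
to_label = select_for_review(predictions, budget=2)
print(to_label)
```

In this sketch the confident predictions for `doc_a.pdf` and `doc_c.pdf` never reach a human, which is where the labeling savings would come from. A real system would retrain on the new labels and repeat the loop.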

AI still requires a human in the loop

As powerful as AI with active learning is, it still requires a human in the loop to ensure trust and accuracy. Palamara said that having a human in the loop is “one of the most important capabilities when it comes to making enterprise AI productive in businesses.”

While correcting errors is a critical task for the human in the loop, they also establish trust. That’s why we’ve created UiPath Action Center to act as a hub for humans to approve AI decisions and override them when necessary.
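One simple way to picture a human in the loop is a confidence threshold that decides which AI decisions are applied automatically and which are queued for a person. The sketch below is an assumption-laden illustration, not the UiPath Action Center API: the `Prediction` class, the `route` function, the queue names, and the 0.9 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    field: str         # e.g. the name of an extracted document field
    value: str         # what the model extracted
    confidence: float  # model's self-reported confidence, 0.0 to 1.0


def route(prediction: Prediction, threshold: float = 0.9) -> str:
    """Send low-confidence predictions to a person; apply the rest automatically."""
    if prediction.confidence >= threshold:
        return "auto_approve"
    return "human_review"


# Hypothetical extraction results from a single invoice.
results = [
    Prediction("invoice_number", "INV-1042", 0.99),
    Prediction("total_amount", "1,250.00", 0.72),
]

for p in results:
    print(p.field, "->", route(p))
```

The threshold is the knob that trades automation for oversight: raising it sends more decisions to people, lowering it lets more through unreviewed.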

The road ahead for AI

Dr. Challis closed the session by asking Barber what he expects from AI in the next several years. Barber expects it to get better at logic and calculations, which, when paired with its existing capabilities, will be a “huge step forward for humanity.”

While AI has its fair share of issues, Dr. Challis reminded the audience that “we have the components to make this safe, to make this responsible, to make this governable. The responsibility is on us to build…the systems we want our businesses to be based on.”

Related read: The top 5 AI announcements from UiPath FORWARD VI

Get the best of FORWARD VI delivered right to your inbox when you register for 'The Best Bits'.

Content and Product Marketing Manager, UiPath
